AI technology has evolved rapidly over the past few years and, like any other innovation, it needs public and government acceptance. That is why it is crucial to introduce and adapt regulations that set boundaries without becoming an obstacle for developers.

What is the EU’s Artificial Intelligence Act?

The European Commission published the first draft of the act in 2021, setting out the basic rules for AI usage and responsibility for potential risks. It also emphasized that any AI technology that appears (or already exists) in the European Union should be developed responsibly, with awareness of its social impact and with respect for the values and rules that apply in Europe.

Over the years the document has been updated several times, and the most recent version was published in 2023. Such revisions are inevitable, because AI technology is still evolving.

That is why there is a plan to create a unified AI law portal, where everyone can check the latest updates and align their own business policies with them. It will also be possible to submit complaints about AI systems that have misused or compromised personal data.

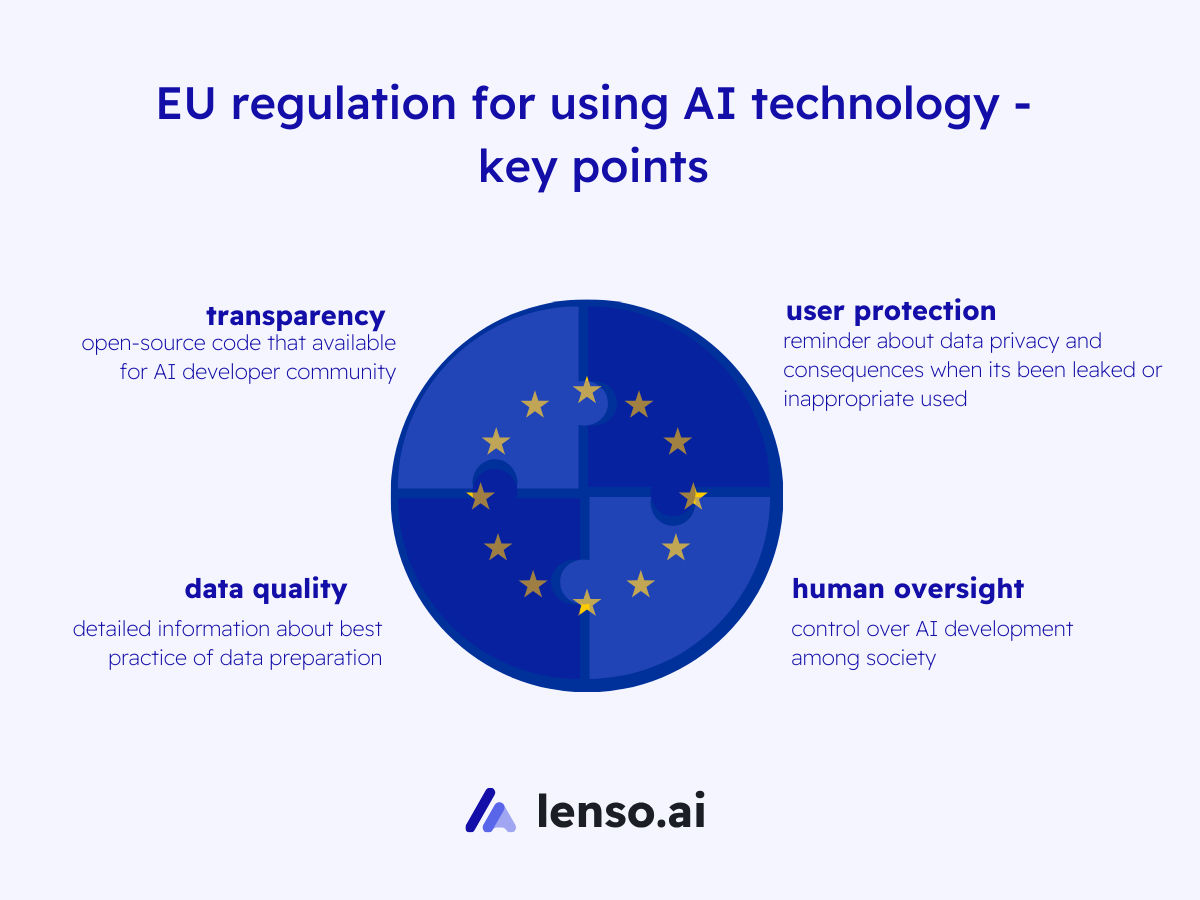

EU regulation for using AI technology - key points

In principle, the EU regulation should explain in detail how AI technology is to be used properly, covering:

- transparency: open-source code that is available to the AI developer community

- data quality: detailed information about best practices for data preparation

- user protection: a reminder about data privacy and the consequences when data is leaked or used inappropriately

- human oversight: societal control over AI development

- accountability: accepting responsibility for actions and decisions

Besides that, AI ethics and its basic requirements play a major role.

The document should also define four risk levels for the use of AI technology:

- Unacceptable:

  - Cognitive behavioral manipulation of people or specific vulnerable groups

  - Social scoring: classifying people based on behavior, socio-economic status or personal characteristics

  - Biometric categorisation of people

- High: AI systems falling into the following areas will have to be registered in an EU database:

  - Management and operation of critical infrastructure

  - Education and vocational training

  - Employment, worker management and access to self-employment

  - Access to essential private services and public services and benefits

  - Law enforcement

  - Migration, asylum and border control management

  - Assistance in legal interpretation and application of the law

- Limited: systems in this tier must meet transparency requirements, such as:

  - Disclosing that the content was generated by AI

  - Designing the model to prevent it from generating illegal content

  - Publishing summaries of copyrighted data used for training

- Minimal

The law aims to offer start-ups and small and medium-sized enterprises opportunities to develop and train AI models before their release to the general public.
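The four risk levels described above can be read as a simple lookup. The following is a minimal Python sketch, not an implementation of the act: the tag names (such as `social_scoring` or `critical_infrastructure`), the `classify` and `needs_eu_registration` functions, and the choice to treat any unlisted use case as minimal risk are all assumptions made for illustration.

```python
from enum import Enum


class RiskLevel(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical tags that loosely mirror the examples listed in the article.
UNACCEPTABLE_USES = {
    "behavioral_manipulation",
    "social_scoring",
    "biometric_categorisation",
}
HIGH_RISK_AREAS = {
    "critical_infrastructure",
    "education",
    "employment",
    "essential_services",
    "law_enforcement",
    "migration",
    "legal_interpretation",
}
LIMITED_RISK_USES = {"generated_content"}


def classify(use_case: str) -> RiskLevel:
    """Map a use-case tag to a risk level, checking the strictest tier first."""
    if use_case in UNACCEPTABLE_USES:
        return RiskLevel.UNACCEPTABLE
    if use_case in HIGH_RISK_AREAS:
        return RiskLevel.HIGH
    if use_case in LIMITED_RISK_USES:
        return RiskLevel.LIMITED
    # Assumption: anything not listed is treated as minimal risk.
    return RiskLevel.MINIMAL


def needs_eu_registration(level: RiskLevel) -> bool:
    """Per the article, only high-risk systems must be registered in an EU database."""
    return level is RiskLevel.HIGH
```

For example, `classify("employment")` returns `RiskLevel.HIGH`, so `needs_eu_registration` is true for it, while an unlisted tag such as `"spam_filter"` falls through to `RiskLevel.MINIMAL`. A real assessment depends on the legal text and context, not on keyword matching.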

Additionally, each risk level must come with specific documentation about the evaluation process and examples of good practices, such as:

- rigorous testing

- proper documentation

- clear business goals

- data protection

It is also worth considering whether AI ethics is a necessity in the modern world.

The regulation may also contain detailed instructions for AI implementation (“dos and don'ts”) that comply with the principles of EU law.

Once the regulation reaches its final stage, the EU also plans to impose fines on companies and developers that misuse AI technology or create a large-scale threat to society.

What is next?

First, the regulation has to be voted on and adopted at the EU level. Unfortunately, such debates often last for months or even years. The next step is implementing the regulation in each EU member state.

After that, companies and developers will have two years to bring their policies and services in line with the new standards. Only after a further six months will authorities begin checking and banning AI services that do not comply with the new law.

Why should such regulation be provided?

First of all, AI technology is currently only partially regulated, and some aspects remain outside the scope of the law. On the one hand, this opens up many possibilities for developers. Unfortunately, in the wrong hands such freedom could be used as a weapon and put the whole process of data protection at risk.

Apart from that, the following points should be considered:

- Protection of fundamental human rights: privacy, non-discrimination, and autonomy.

- Safety and reliability: regulation can establish standards and requirements for the safety and reliability of AI systems.

- Transparency and accountability: requirements for explaining AI decision-making processes, disclosing data sources and training methods, and assigning responsibility for AI-related outcomes.

- Ethical considerations: AI raises various ethical concerns, including issues related to fairness, bias, accountability, and the impact on jobs and society.

- Promotion of innovation and competitiveness: clear and consistent regulation can provide certainty and confidence to businesses and consumers. It can also help ensure a level playing field for businesses operating in the EU market.

- Global leadership: by developing and implementing robust regulation for AI, the EU can position itself as a global leader in responsible AI governance.
